In SQL queries, we do use Group by clause to apply aggregate functions.

- But what is the purpose behind using numeric value instead of columnname with Group by clause? For example: Group by 1.

MySQL - How To Get Top N Rows per Each Group. This code is executed for each row and stores the value of country column to @currentcountry. = IF(@currentcountry = country, @countryrank + 1, 1) In this code, if @currentcountry is the same we increment rank, otherwise set it to 1. For the first row @currentcountry is NULL.

ursitesionursitesion

6 Answers

This is actually a really bad thing to do IMHO, and it's not supported in most other database platforms.

The reasons people do it:

- they're lazy - I don't know why people think their productivity is improved by writing terse code rather than typing for an extra 40 milliseconds to get much more literal code.

The reasons it's bad:

- it's not self-documenting - someone is going to have to go parse the SELECT list to figure out the grouping. It would actually be a little more clear in SQL Server, which doesn't support cowboy who-knows-what-will-happen grouping like MySQL does.

- it's brittle - someone comes in and changes the SELECT list because the business users wanted a different report output, and now your output is a mess. If you had used column names in the GROUP BY, order in the SELECT list would be irrelevant.

SQL Server supports ORDER BY [ordinal]; here are some parallel arguments against its use:

Aaron Bertrand♦Aaron Bertrand

MySQL allows you to do

GROUP BY with aliases (Problems with Column Aliases). This would be far better that doing GROUP BY with numbers.- Some have

column numberin SQL diagrams. One line says: Sorts the result by the given column number, or by an expression. If the expression is a single parameter, then the value is interpreted as a column number. Negative column numbers reverse the sort order.

Google has many examples of using it and why many have stopped using it.

To be honest with you, I haven't used column numbers for

ORDER BY and GROUP BY since 1996 (I was doing Oracle PL/SQL Development at the time). Using column numbers is really for old-timers and backward compatibility allows such developers to use MySQL and other RDBMSs that still allow for it.RolandoMySQLDBARolandoMySQLDBA

Consider below case:

You've to find out the number of downloads per service per day considering Apps and Applications as the same service. Grouping by

date, services would result in Apps and Applications being considered separate services.

In that case, query would be:

And Output:

But this is not what you want since Applications and Apps to be grouped is the requirement. So what can we do?

One way is to replace

Apps with Applications using a CASE expression or the IF function and then grouping them over services as:But this still groups services considering

Apps and Applications as different services and gives the same output as previously:Grouping over a column number allows you to group data on an aliased column.

And thus giving you desired output as below:

I've read many times that this is a lazy way of writing queries or grouping over an aliased column does not work in MySQL, but this is the way of grouping over aliased columns.

This isn't the preferred way of writing queries, use it only when you really need to group over an aliased column.

imVJimVJ

There is no valid reason to use it. It is simply a lazy shortcut specially designed to make it difficult for some hard-pressed developer to figure out your grouping or sorting later on or to allow the code to fail miserably when someone changes the column order.Be considerate of your fellow developers and don't do it.

BriteSpongeBriteSponge

This is worked for me. The code groups the rows up up to 5 groups.

The result will be as following

Paul White♦

ship shuk - www.shipshuk.comship shuk - www.shipshuk.com

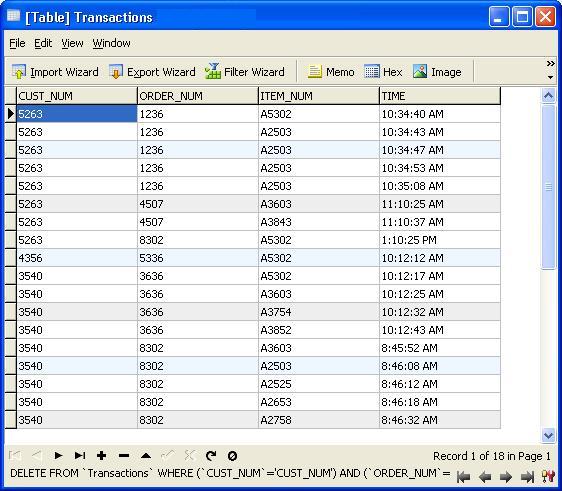

Consider above queries:Group by 1 means to group by the first column and group by 1,2 means to group by the first and second column and group by 1,2,3 means to group by first second and third column. For eg:

this image shows the first two columns grouped by 1,2 i.e., it is not considering the different values of dep_date to find the count(to calculate count all distinct combinations of first two columns is taken into consideration) whereas the second query results this

image. Here it is considering all the first three columns and there different values to find the count i.e., it is grouping by all the first three columns(to calculate count all distinct combinations of first three columns is taken into consideration).

Paul White♦

codercoder

Not the answer you're looking for? Browse other questions tagged mysqlplsqlgroup-bysyntax or ask your own question.

I wrote a post recently about DISTINCT and GROUP BY. It was a comparison that showed that GROUP BY is generally a better option than DISTINCT. It's on a different site, but be sure to come back to sqlperformance.com right after..

One of the query comparisons that I showed in that post was between a GROUP BY and DISTINCT for a sub-query, showing that the DISTINCT is a lot slower, because it has to fetch the Product Name for every row in the Sales table, rather than just for each different ProductID. This is quite plain from the query plans, where you can see that in the first query, the Aggregate operates on data from just one table, rather than on the results of the join. Oh, and both queries give the same 266 rows.

Now, it's been pointed out, including by Adam Machanic (@adammachanic) in a tweet referencing Aaron's post about GROUP BY v DISTINCT that the two queries are essentially different, that one is actually asking for the set of distinct combinations on the results of the sub-query, rather than running the sub-query across the distinct values that are passed in. It's what we see in the plan, and is the reason why the performance is so different.

The thing is that we would all assume that the results are going to be identical.

But that's an assumption, and isn't a good one.

I'm going to imagine for a moment that the Query Optimizer has come up with a different plan. I used hints for this, but as you would know, the Query Optimizer can choose to create plans in all kinds of shapes for all kinds of reasons.

In this situation, we either do 266 Seeks into the Product table, one for each different ProductID that we're interested in, or 121,317 Seeks. So if we are thinking about a particular ProductID, we know that we're going to get a single Name back from the first one. And we assume that we're going to get a single Name back for that ProductID, even if we have to ask for it hundred times. We just assume we're going to get the same results back.

But what if we don't?

This sounds like a isolation level thing, so let's use NOLOCK when we hit the Product table. And let's run (in a different window) a script the changes the text in the Name columns. I'm going to do it over and over, to try to get some of the changes in between my query.

Now, my results are different. The plans are the same (except for the number of rows coming out of the Hash Aggregate in the second query), but my results are different.

Sure enough, I have more rows with DISTINCT, because it finds different Name values for the same ProductID. And I don't necessarily have 295 rows. Another I run it, I might get 273, or 300, or possibly, 121,317.

It's not hard to find an example of a ProductID that shows multiple Name values, confirming what's going on.

Clearly, to ensure that we don't see these rows in the results, we would either need to NOT use DISTINCT, or else use a stricter isolation level.

The thing is that although I mentioned using NOLOCK for this example, I didn't need to. This situation occurs even with READ COMMITTED, which is the default isolation level on many SQL Server systems.

You see, we need the REPEATABLE READ isolation level to avoid this situation, to hold the locks on each row once it has been read. Otherwise, a separate thread might change the data, as we saw.

But… I can't show you that the results are fixed, because I couldn't manage to avoid a deadlock on the query.

So let's change the conditions, by making sure that our other query is less of a problem. Instead of updating the whole table at a time (which is far less likely in the real world anyway), let's just update a single row at a time.

Now, we can still demonstrate the problem under a lesser isolation level, such as READ COMMITTED or READ UNCOMMITTED (although you may need to run the query multiple times if you get 266 the first time, because the chance of updating a row during the query is less), and now we can demonstrate that REPEATABLE READ fixes it (no matter how many times we run the query).

REPEATABLE READ does what it says on the tin. Once you read a row within a transaction, it's locked to make sure you can repeat the read and get the same results. The lesser isolation levels don't take out those locks until you try to change the data. If your query plan never needs to repeat a read (as is the case with the shape of our GROUP BY plans), then you're not going to need REPEATABLE READ.

Arguably, we should always use the higher isolation levels, such as REPEATABLE READ or SERIALIZABLE, but it all comes down to figuring out what our systems need. These levels can introduce unwanted locking, and SNAPSHOT isolation levels require versioning that comes with a price as well. For me, I think it's a trade-off. If I'm asking for a query that could be affected by changing data, then I might need to raise the isolation level for a while.

Ideally, you simply don't update data that has just been read and might need to be read again during the query, so that you don't need REPEATABLE READ. But it's definitely worth understanding what can happen, and recognising that this is the kind of scenario when DISTINCT and GROUP BY might not be the same.